Tuesday, 6 February 2018

New blog location

My new site can be found at http://www.adamstorr.co.uk

Please update your feeds so you don't miss out on all the new content!

Cheers

Tuesday, 22 July 2014

Enums in C#; Doing More Than You Thought!

I have been developing for a while now and use Enums on a daily basis (nearly). I was quite happy with my understanding that an Enum definition had a set number of values, and that those values could be cast to the related integer value (or another underlying type) and back again.

And then I saw the following piece of code (condensed down for example):

System.Net.HttpStatusCode value = (System.Net.HttpStatusCode)429;
var result = (429 == (int)value);

There was no member of System.Net.HttpStatusCode corresponding to 429 in the version of the framework I was using, yet the code compiled, ran, and the result variable was true when the enum value was cast back to an int!
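To see this behaviour in isolation, here's a minimal sketch using a made-up enum (TrafficLight and EnumDemo are purely illustrative names, not from the original code). Casting an arbitrary int compiles, round-trips back to the same int, and prints as the number because there's no member name to show. Enum.IsDefined is one way to check whether a value really is one of the declared members.

```csharp
using System;

// Hypothetical enum used only for this illustration.
public enum TrafficLight
{
    Red = 1,
    Amber = 2,
    Green = 3
}

public static class EnumDemo
{
    // The compiler does not check that the int matches a declared member.
    public static TrafficLight FromInt(int value) => (TrafficLight)value;

    // True only when the value corresponds to one of the named members.
    public static bool IsNamedMember(TrafficLight light) =>
        Enum.IsDefined(typeof(TrafficLight), light);
}
```

For example, EnumDemo.FromInt(429) gives a TrafficLight whose ToString() is "429". If enum values arrive from outside your code (query strings, databases, other APIs), a check like IsNamedMember before trusting them may be worth considering.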

So why is this possible? Enter the C# specification!

Quick side note: there's no need to search the internet for the C# language specification; if you have Visual Studio 2013 installed locally, you already have it. It can be found at:

C:\Program Files (x86)\Microsoft Visual Studio 12.0\VC#\Specifications\1033

A quick browse of section 1.10, Enums, answered straight away, at a high level, why this is possible.

“The set of values that an enum type can take on is not limited by its enum members.”

The rest of the section is quite interesting as well with regard to the underlying type of an enum type. On further reading of chapter 14 – Enums, there are a lot of bits which, as a developer, you take for granted and use without really thinking about them. It’s actually quite interesting.
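Here's a small sketch of two of those taken-for-granted details, using a hypothetical Priority enum: the underlying type can be something other than int (byte here), and the default value of any enum is zero, even when no member is declared with that value.

```csharp
using System;

// Hypothetical enum with an explicit byte underlying type and no member for 0.
public enum Priority : byte
{
    Low = 1,
    High = 2
}

public static class PriorityInfo
{
    // The integral type the enum is stored as (byte here; int if not specified).
    public static Type Underlying => Enum.GetUnderlyingType(typeof(Priority));

    // default(Priority) is the value 0, even though no member is named 0.
    public static Priority Default => default(Priority);
}
```

This is one reason a freshly created object with an uninitialised enum property can hold a value that isn't in the member list.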

Makes me wonder what else I’m missing out on, maybe I should read more of the specification? Maybe the whole specification?

Thursday, 6 February 2014

Updated Json.Net and now OWIN errors

Last night I updated all of the NuGet packages in an Asp.Net MVC 5 application to their latest versions. The main reason was that I had added SignalR to the solution, and it required a newer version of OWIN, which is fine. Updating all packages also updated Json.Net to the newly released version 6, and on starting the application I got the following error:

Could not load file or assembly 'Newtonsoft.Json, Version=4.5.0.0, Culture=neutral, PublicKeyToken=30ad4fe6b2a6aeed' or one of its dependencies. The located assembly's manifest definition does not match the assembly reference. (Exception from HRESULT: 0x80131040)

Using the information in the error message, I added the following binding redirect to the runtime element in web.config (dependentAssembly entries sit inside its assemblyBinding element) to resolve the issue:

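<!-- inside the <runtime> element of web.config -->
<assemblyBinding xmlns="urn:schemas-microsoft-com:asm.v1">
  <dependentAssembly>
    <assemblyIdentity name="Newtonsoft.Json" publicKeyToken="30ad4fe6b2a6aeed" culture="neutral" />
    <bindingRedirect oldVersion="0.0.0.0-4.5.0.0" newVersion="6.0.0.0" />
  </dependentAssembly>
</assemblyBinding>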

I think NuGet is a great concept, but I still think there is a bit of an issue when multiple packages rely on a specific version of another package, and that package is then updated when you update all. In future I will be more selective about which packages I update.

Wednesday, 22 January 2014

RavenDB Import / Export in code

The recommended way of doing automatic backups is to run Smuggler on the server as a scheduled job, and manual backups and restores are done through the RavenDB Management Studio, but what happens if you can't do either of those? It’s time to build import/export functionality into your application.

This was a requirement I looked at a while back, and when I investigated further more recently I found a thread on the RavenDB Google group and the associated pull request which allows smuggling functionality without the HTTP server running; problem solved! So how can it be used?

The changes extracted an interface, ISmugglerApi, to provide a common mechanism for both the server/HTTP and the embedded implementations.

So how do we back up? This is an implementation from an MVC application's perspective. Context is used to choose between the two implementations if you need to develop and/or deploy on the different platforms.

public async Task<ActionResult> Backup()
{
    SmugglerOptions smugglerOptions = new SmugglerOptions { BackupPath = Server.MapPath(ServerMapPath) };

    switch (Context)
    {
        case Context.Embedded:
            // Embedded: export directly from the in-process DocumentDatabase
            DataDumper dumper = new DataDumper(((EmbeddableDocumentStore)MvcApplication.Store).DocumentDatabase, smugglerOptions);
            var embeddedExport = dumper.ExportData(null, smugglerOptions, false);
            await embeddedExport;
            break;

        case Context.Server:
            // Server: export over HTTP using the server's connection details
            var connectionStringOptions = new RavenConnectionStringOptions
            {
                ApiKey = "insert ApiKey",
                DefaultDatabase = "db_name",
                Url = "http://localhost:8080"
            };

            var smugglerApi = new SmugglerApi(smugglerOptions, connectionStringOptions);
            var serverExport = smugglerApi.ExportData(null, smugglerOptions, false);
            await serverExport;
            break;
    }

    // Send the exported file back to the browser as a download
    return File(Server.MapPath(ServerMapPath), "application/binary", "backup.dump");
}

For the embedded version you specify the mapped server path on the local machine where the file will be written, create a new instance of the DataDumper class and call ExportData. For this basic usage there is no need for a stream or to set the incremental flag.

For the hosted server version you have to specify the API key, the database name and the URL of the server to use. These are all pieces of information you should know, or be able to get access to, if you are developing in this way. Once these are set you can call ExportData in the same way as the DataDumper call.
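The switch on Context appears in both the Backup and Restore actions. One way to make that choice only once is to lean on a common interface, in the spirit of ISmugglerApi. The sketch below is hypothetical: IBackupExporter, EmbeddedExporter, ServerExporter, HostingContext and ExporterFactory are invented names, and the exporters just return descriptive strings rather than calling RavenDB.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical abstraction in the spirit of ISmugglerApi; these names are
// invented for illustration and are NOT part of the RavenDB client API.
public interface IBackupExporter
{
    Task<string> ExportAsync(string backupPath);
}

public sealed class EmbeddedExporter : IBackupExporter
{
    // Stand-in for exporting straight from an in-process database.
    public Task<string> ExportAsync(string backupPath) =>
        Task.FromResult("embedded export to " + backupPath);
}

public sealed class ServerExporter : IBackupExporter
{
    private readonly string _url;

    public ServerExporter(string url)
    {
        _url = url;
    }

    // Stand-in for exporting over HTTP from a remote server.
    public Task<string> ExportAsync(string backupPath) =>
        Task.FromResult("server export from " + _url + " to " + backupPath);
}

public enum HostingContext { Embedded, Server }

public static class ExporterFactory
{
    // The platform decision is made once here, rather than in every action.
    public static IBackupExporter Create(HostingContext context)
    {
        switch (context)
        {
            case HostingContext.Embedded:
                return new EmbeddedExporter();
            case HostingContext.Server:
                return new ServerExporter("http://localhost:8080");
            default:
                throw new ArgumentOutOfRangeException(nameof(context));
        }
    }
}
```

With something like this in place, an action would only need to create the exporter and await ExportAsync, and adding a third platform would touch the factory rather than every action.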

Restoring the backed up file is very similar but in reverse.

public async Task<ActionResult> Restore()
{
    SmugglerOptions smugglerOptions = new SmugglerOptions { BackupPath = Server.MapPath(ServerMapPath) };

    switch (Context)
    {
        case Context.Embedded:
            // Embedded: import directly into the in-process DocumentDatabase
            DataDumper dumper = new DataDumper(((EmbeddableDocumentStore)MvcApplication.Store).DocumentDatabase, smugglerOptions);
            var embeddedImport = dumper.ImportData(smugglerOptions);
            await embeddedImport;
            break;

        case Context.Server:
            // Server: import over HTTP using the server's connection details
            var connectionStringOptions = new RavenConnectionStringOptions
            {
                ApiKey = "insert ApiKey",
                DefaultDatabase = "db_name",
                Url = "http://localhost:8080"
            };

            var smugglerApi = new SmugglerApi(smugglerOptions, connectionStringOptions);
            var serverImport = smugglerApi.ImportData(smugglerOptions);
            await serverImport;
            break;
    }

    return View("Index");
}

There are many other options which can be used but the above is one of the simplest implementations.

When I was researching how to use this mechanism I couldn't find any examples in the blogosphere; however, reading the code and the unit tests in the RavenDB source gave me enough to work with. If you are struggling to find examples of how to use an open source project, I’d highly recommend reading its unit tests and source; they make good documentation, especially if the mechanism you are trying to use is used by the software itself.

Thursday, 29 August 2013

Feeling overwhelmed and like a phony? Chill out!

Do you ever feel overwhelmed by the amount of new technology which is coming out all the time? The new framework to do this, and the new improved way of doing that. The new blog posts about this and that, the never ending Pluralsight courses by John Sonmez? It can be too much!

Recently I’ve been feeling this way, as well as losing the motivation I used to have to code. I’ve not lost the joy of coding or problem solving, but the combination of being overwhelmed by all the bleeding-edge technology and information coming out of the developer community and, in contrast, the recurring day-to-day coding on brownfield code bases running VS2010 (and every so often VS2005) can get you down.

Recently I’ve got to a point in my life/career where I feel like a bit of a phoney. I’ve worked very hard to get where I am today and at only 30 years old I potentially still get seen by some as the opinionated upstart when I’m not. I know I have a lot more to learn but I think everyone does whether that’s new processes in your day to day life, understanding ways of doing tasks in new positions etc. You should always be learning and adapting.

The realisation is that trying to learn everything is just not practical. Reading code is a great way to learn new techniques which you can apply to your day-to-day developer life, but learning the ins and outs of every framework is just not possible. The other aspect of learning is that you can run through a Pluralsight course on this or that, but if you’re not using it all day every day at work, or at least for a couple of hours in the evenings each week on a personal project, you’ll very quickly forget it. This, I think, is my main frustration.

So as I see it there are a few ways to solve this issue, ranging from minor to life changing. You can ignore all new technology, carry on with your day-to-day life and not do anything outside of work; essentially, put your head in the sand. This will, however, leave your skills slowly stagnating and make future career progression hard. At the other end of the scale you can move jobs and go and work for a company which uses the latest and greatest and is always starting new greenfield projects. This will help improve your skill set and keep it up to date, but it will change your life completely, which isn’t always the answer either. Changing jobs, whether forced through redundancy or by choice, is a big change!

So what can you do? Learn every 3rd new technology which appears on the Pluralsight new course listing? This isn’t practical. Maybe try and influence technology at work? Spend time during the day (lunch time, 30mins before heading home) reviewing new technology? Both of these are possible but will depend on personal situation.

I’ve been thinking over this conundrum recently (and the imminent birth of my first child has helped my thinking on this subject), and the biggest issue is that I’ve been putting too much pressure on myself. Too much pressure to learn new technologies, too much pressure to read every blog post about every technology. Also, when I do look at a technology and write pet/personal projects, thoughts such as “it needs to be production ready code straight away”, “you need to get it done now” and “it needs to be perfect first time” don’t aid motivation; in fact they demotivate.

So if you find yourself in a similar situation to mine and don’t know what the answer is, then use the realisation that has only recently hit home for me: chill out! Don’t put yourself under unnecessary pressure. Yes, continue to read blog posts. Yes, continue to play with new technologies as and when you can … just don’t pressure yourself into having to do it all the time, as it can be harmful as well as beneficial.

Thursday, 18 July 2013

Make your code readable by making it flow with Generics

I’ve seen a couple of examples of code recently which have a method that works primarily on a base class, but whose result will always be cast to a concrete implementation. This can make the code a bit clunky, as each caller has to cast the result to the type it’s expecting. Let me show you what I mean:

public abstract class Base
{
    public string Comment { get; set; }
}

public class Concrete : Base
{
}

public class Update
{
    public static Base AddComment(string message)
    {
        // the type created can be determined by some other means eg db call etc.
        var response = new Concrete();
        response.Comment = message;
        return response;
    }
}

And then when it is called it needs to cast to the required type:

var concrete = (Concrete) Update.AddComment("update the comment");

This code snippet doesn’t read too badly, but once the system gets bigger, or this technique is used for more complicated types/systems, it can start to look clunky. As you know which types you’ll be working with and what you expect back when calling the code, you can make the code clearer, and let it flow, by using generics.

public class Update
{
    private static Base AddComment(string message)
    {
        // the type created can be determined by some other means eg db call etc.
        var response = new Concrete();
        response.Comment = message;
        return response;
    }

    public static T AddComment<T>(string message) where T : Base
    {
        // in this example the call is into the original method
        // but this does not have to be the case
        return (T) AddComment(message);
    }
}

Notice I’ve made the original AddComment method private as I don’t want to allow any callers access to it directly. The example calls into the original private method but this does not have to be the case.
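One thing to be aware of with the cast version: if the object created inside isn't actually a T, the (T) cast throws an InvalidCastException at run time. When the caller always decides which concrete type it wants (rather than, say, a db call), an alternative worth knowing about is the new() constraint, which lets the method create the T itself so no cast is needed at all. This is a sketch of that alternative, not the approach from the post, and it doesn't fit the case where the type is determined elsewhere.

```csharp
using System;

public abstract class Base
{
    public string Comment { get; set; }
}

public class Concrete : Base { }

public static class Update
{
    // Alternative sketch: new() lets the method construct T directly,
    // so there is no cast and therefore no InvalidCastException risk.
    public static T AddComment<T>(string message) where T : Base, new()
    {
        var response = new T();
        response.Comment = message;
        return response;
    }
}
```

The calling code reads exactly the same: Update.AddComment<Concrete>("update the concrete").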

Now when the same functionality is called in the main program flow it looks like this:

var concrete = Update.AddComment<Concrete>("update the concrete");

Updating the API in this way makes the calling code clearer to read and makes the API signature developer friendly so a potential cast is not missed out.

Sunday, 19 May 2013

Asp.net MVC Output Caching During Development

During some Asp.Net MVC development for a public website recently I have been looking at caching. The content of the site has minimal churn so I looked to use OutputCache. This works fine once it’s been finished and released. However it causes frustrations during development when you want to change something, compile, refresh and nothing happens.

One option is to comment out the OutputCache attribute, either on each action or on the specific controller, during development but that seems a bit crazy and isn’t sustainable. So what can we do about this?

A preprocessor directive is one potential answer, specifically #if combined with the DEBUG symbol, for example:

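using System.Web.Mvc;
using System.Web.UI; // OutputCacheLocation

#if !DEBUG
// Only compiled into non-Debug builds: cache for 36000 seconds (10 hours)
[OutputCache(Location = OutputCacheLocation.Any, Duration = 36000)]
#endif
public class HomeController : Controller
{
    public ActionResult Index()
    {
        return View();
    }
}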

Now when you build in Debug mode (where the DEBUG symbol is defined by default) the attribute isn't compiled in, so caching is disabled. Once you’re happy and ready to release, change the build configuration to “Release” and the attribute will be included, ready to deploy to the production environment.
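The same #if technique isn't limited to attributes. Here's a small sketch (CacheSettings is a hypothetical helper, not part of MVC) that picks a value per build configuration, which could be handy for logging at start-up which mode you're running in. Note that attribute arguments must be compile-time constants, so a property like this couldn't be passed to [OutputCache] directly.

```csharp
using System;

public static class CacheSettings
{
    // Which return statement gets compiled depends on whether DEBUG is defined.
    public static int OutputCacheSeconds
    {
        get
        {
#if DEBUG
            return 0;       // Debug build: no caching while developing
#else
            return 36000;   // other builds: 36000 s = 10 hours
#endif
        }
    }

    public static bool CachingEnabled => OutputCacheSeconds > 0;
}
```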

Tuesday, 7 May 2013

Tournament – Results and Calculating Scores with RavenDB Indexes

This is the 4th part of a series that is following my progress writing a sporting tournament results website. The previous posts in this series can be found at:

- Part 1 – My Journey with Asp.Net MVC and RavenDB – Introduction to Tournament

- Part 2 – Tournament – Project Setup

- Part 3 – Tournament – Learning to CRUD

In the previous post I explained the basic CRUD operations and interesting routing issues which I found to get the setup I was looking for.

What do I want to achieve?

By the end of this post I will have shown you how I have started saving match data, how I chose a jQuery plugin to aid the user interface when entering results, and my first attempt at an index to calculate the scores of the matches so far.

Match up details

Arguably the most important part of a results stats website is recording the match ups between players and their outcomes. Once this is done, the accumulation of the result scores can be implemented. How they are linked to Events is a small issue compared to recording the actual results.

Let’s start with the first part and see how it goes.

So let’s take a look at some of the potential scenarios which could happen and how we will deal with them.

- Singles and doubles match ups with home and away players

- Players could play for the other team if the numbers don’t match up

- Players could move club between events

- Ringers could play for any club at any time

To address these issues, each match up needs a collection of home players and a collection of away players. These will be the player records at the point in time at which the match up takes place; the assumption here is that the match details won’t be changed after any player changes are made for future events. As we’re using a document database and the match is the root, each match will hold a copy of each player record as it was when the match took place.

public class Match : RootAggregate
{
    // todo: link to leg
    public Classification Classification { get; set; }
    public Result Result { get; set; }
    public Team WinningTeam { get; set; }
    public Team HomeTeam { get; set; }
    public Team AwayTeam { get; set; }
    public ICollection<Player> HomePlayers { get; set; }
    public ICollection<Player> AwayPlayers { get; set; }
    public ICollection<Comment> Comments { get; set; }
}

As we can see above, we also want a reference to both the home and away teams as well as the winning team. These details by themselves would work fine and store the data as required. I have decided to include a Classification enum to make it easier to distinguish between Singles and Doubles match ups (the Doubles classification will also be used for 3 on 2 match ups). Because of the 3 on 2 scenarios I’ve kept the players as collections rather than explicit player 1 and player 2 properties. In addition to this I’ve added an associated “Result” enum value.

public enum Result
{
    Incomplete = 0,
    Draw,
    HomeWin,
    AwayWin
}

Having this result value will aid with determining the scores without having to do comparisons between different properties (and potential sub properties) with the aim to make the score index calculations cleaner to read.

Chosen

Chosen is a jQuery plugin to aid with making dropdowns and multi selects more user friendly. I first came across this about 12 months back when it was introduced at work to aid with large dropdown selectors. I decided to use this because I would be selecting individual teams from dropdowns but mainly I would be selecting multiple players from a multi select form input and wanted to make it keyboard friendly to speed up result entry.

As you can see from the small screen shot above it aids with single selection and makes the multi select look cleaner by hiding the “noise” around a default vanilla multi select input form control.

I’m not completely sold on this being the final solution; however, it performs as required for now. When I get to working on the UI I will decide whether Chosen is the best way to go or whether to find an alternative solution.

Score calculations

I had read multiple blog entries on how to do a Map / Reduce index in Raven DB. The general concept of this is to map from a document collection into a simple form with a counter set to 1 which can then be collated and grouped on a property and the counter value can be aggregated to get the full result. This would be fine however I want to count Wins, Losses, Draws and eventually Extras per player in one index. I knew there had to be a way but what was it?

This is when I discovered AbstractMultiMapIndexCreationTask. It works in a similar way to the more basic AbstractIndexCreationTask<T>; however, instead of mapping a single document type (as defined by T), it provides a generic method called AddMap<T>. AddMap lets you define the document type and map it into a separate result type (as with AbstractIndexCreationTask), but you can call it as many times as you like (although I’d imagine there is a limit somewhere, either implementation-wise or coding-standards-wise) on any number of document collection types.

Using the AddMap method I added a “map” for home wins / losses, away wins / losses and draws (irrespective of whether the player was playing at home or away). I will look to build on this in the future to produce more stats, such as “performs better on home soil” type scenarios.

AddMap<Match>(matches => from match in matches
                         from player in match.HomePlayers.Select(x => x)
                         where match.Result == Enumerations.Result.HomeWin
                         select new Result
                         {
                             PlayerId = player.Id,
                             Wins = 1,
                             Losses = 0,
                             Draws = 0
                         });

Above is one of the AddMap calls. Looking at this you can see how, using a combination of HomePlayers and the Result value, we can determine that the home players have a win. After another internal code review I noticed that I didn’t need the Select(x => x); it was left over from earlier development, when I was looking at extracting just the player id, and will be updated.

I now had the “mapped” part of the implementation. At this point I had a collection of Result objects, with each entry being the result of an individual match for a player in that match.

The Reduce part is a simple aggregation using the PlayerId as the group key. This can be easily established using the code below.

Reduce = results => from result in results
                    group result by result.PlayerId into r
                    select new Result
                    {
                        PlayerId = r.Key,
                        Wins = r.Sum(x => x.Wins),
                        Losses = r.Sum(x => x.Losses),
                        Draws = r.Sum(x => x.Draws)
                    };

This will give you a result record per player, who has played at least one match, and their “record” for all events.
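To make the map and reduce steps concrete without a RavenDB server, here's a sketch that runs the same idea over in-memory objects with LINQ to Objects. It's a simplification under stated assumptions rather than the actual index: MatchRecord, PlayerTally and ScoreCalculator are hypothetical names, and players are referenced by id only. Each query in Map plays the role of one AddMap call, and Calculate plays the role of Reduce. Both produce the same row shape; as I understand it, RavenDB can re-run a reduce over already-reduced rows, which is one reason for that.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Simplified, hypothetical stand-ins for the post's Match and index Result types.
public enum MatchResult { Incomplete = 0, Draw, HomeWin, AwayWin }

public sealed class MatchRecord
{
    public MatchResult Result { get; set; }
    public List<string> HomePlayerIds { get; set; } = new List<string>();
    public List<string> AwayPlayerIds { get; set; } = new List<string>();
}

public sealed class PlayerTally
{
    public string PlayerId { get; set; } = "";
    public int Wins { get; set; }
    public int Losses { get; set; }
    public int Draws { get; set; }
}

public static class ScoreCalculator
{
    // "Map": each query acts like one AddMap call, emitting one row per
    // player per match with a single counter set to 1.
    private static IEnumerable<PlayerTally> Map(IReadOnlyCollection<MatchRecord> matches)
    {
        var homeWins = from m in matches where m.Result == MatchResult.HomeWin
                       from id in m.HomePlayerIds
                       select new PlayerTally { PlayerId = id, Wins = 1 };
        var awayLosses = from m in matches where m.Result == MatchResult.HomeWin
                         from id in m.AwayPlayerIds
                         select new PlayerTally { PlayerId = id, Losses = 1 };
        var awayWins = from m in matches where m.Result == MatchResult.AwayWin
                       from id in m.AwayPlayerIds
                       select new PlayerTally { PlayerId = id, Wins = 1 };
        var homeLosses = from m in matches where m.Result == MatchResult.AwayWin
                         from id in m.HomePlayerIds
                         select new PlayerTally { PlayerId = id, Losses = 1 };
        var draws = from m in matches where m.Result == MatchResult.Draw
                    from id in m.HomePlayerIds.Concat(m.AwayPlayerIds)
                    select new PlayerTally { PlayerId = id, Draws = 1 };

        // Incomplete matches satisfy none of the queries, so they contribute nothing.
        return homeWins.Concat(awayLosses).Concat(awayWins).Concat(homeLosses).Concat(draws);
    }

    // "Reduce": group the mapped rows by player and sum each counter.
    public static List<PlayerTally> Calculate(IReadOnlyCollection<MatchRecord> matches) =>
        (from row in Map(matches)
         group row by row.PlayerId into g
         select new PlayerTally
         {
             PlayerId = g.Key,
             Wins = g.Sum(x => x.Wins),
             Losses = g.Sum(x => x.Losses),
             Draws = g.Sum(x => x.Draws)
         })
        .OrderBy(t => t.PlayerId, StringComparer.Ordinal)
        .ToList();
}
```

As with the index, a player who has only appeared in incomplete matches gets no record at all.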

At this point it got me the data I wanted but I also wanted to display the player information as well as the results. I first started off looking to do a “join” between the index results and a Player query although I realised I was still thinking too “relational” and needed to find another cleaner option; enter TransformResults.

TransformResults is another function on an index which you can access much like AddMap and Reduce. The tooltip description is a bit cryptic “The result translator definition” but if you allow Visual Studio to work its magic to set out the signature it makes a bit more sense …

TransformResults = (database, results) =>

What this gives you is a delegate, in the form of a function expression, which takes an IClientSideDatabase and the IEnumerable<T> of your result set, which is the output of the Reduce we did earlier. The IClientSideDatabase interface, and its associated implementation which RavenDB passes in at run time, is purely a lightweight data accessor. As per the documentation, its methods are used purely for loading documents during result transformations; perfect! Using this mechanism I was able to Load the player record for each of the result values to access the additional information.

TransformResults = (database, results) => from result in results
                                          let player = database.Load<Player>(result.PlayerId)
                                          select new Result
                                          {
                                              PlayerId = result.PlayerId,
                                              Player = player,
                                              Wins = result.Wins,
                                              Losses = result.Losses,
                                              Draws = result.Draws
                                          };
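The transform is essentially "for each reduced row, load the related document by id and project a new row that carries it". Here's an in-memory sketch of that shape, where a dictionary stands in for database.Load<Player>(id). PlayerInfo, ScoreRow and ResultTransformer are hypothetical names, and only Wins is carried through to keep it short.

```csharp
using System.Collections.Generic;
using System.Linq;

// Hypothetical, simplified types: PlayerInfo stands in for the Player document
// and ScoreRow for the reduced index Result.
public sealed class PlayerInfo
{
    public string Id { get; set; } = "";
    public string Name { get; set; } = "";
}

public sealed class ScoreRow
{
    public string PlayerId { get; set; } = "";
    public int Wins { get; set; }
    public PlayerInfo Player { get; set; }
}

public static class ResultTransformer
{
    // Stand-in for database.Load<Player>(id): returns null if the id is unknown.
    private static PlayerInfo Load(IReadOnlyDictionary<string, PlayerInfo> store, string id)
    {
        PlayerInfo player;
        return store.TryGetValue(id, out player) ? player : null;
    }

    // Same shape as the TransformResults query: load the related player
    // for each reduced row and project a new row that carries it.
    public static List<ScoreRow> Transform(
        IEnumerable<ScoreRow> results,
        IReadOnlyDictionary<string, PlayerInfo> store) =>
        (from result in results
         let player = Load(store, result.PlayerId)
         select new ScoreRow { PlayerId = result.PlayerId, Wins = result.Wins, Player = player })
        .ToList();
}
```

Handling a missing player (null here) is worth thinking about in the real index too, for example if a player document is ever deleted.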

Conclusion

In this post I have gone over how I’ve decided to model match information, how I used a jQuery plugin called Chosen to aid the user experience when adding / editing match data, and how I used an AbstractMultiMapIndexCreationTask derived index in Raven DB to calculate the scores for each player, returning their scores along with their player data.

As always you can follow the progress of the project on GitHub. Any pointers, comments, suggestions please let me know via Twitter or leave a comment on this blog.

Friday, 26 April 2013

Don’t reinvent the wheel; use the tools provided

Every so often I read a blog post which is interesting, and the ideas it’s trying to portray are good, but it’s the small snippets of code in it which can start to annoy me. It’s usually the little things, such as rewriting functions which the .NET Framework will do for you.

One of the biggest culprits is when you have an array of strings, usually role names in user management, and you want a single comma-separated string of them. I’ve seen for loops and foreach loops … I don’t think I’ve seen a while loop implementation yet. I’ve seen variations on a theme for the internal loop logic as well, but you get the idea, and you end up with something like:

var roles = string.Empty;
for (var index = 0; index < Roles.Length; index++)
{
    roles += Roles[index];
    if (index != Roles.Length - 1)
    {
        roles += ",";
    }
}
return roles;

Now, it’s syntactically correct and yes it does what is required and yes this is a personal frustration of mine so I’m not pointing to anyone specifically but unless you’re doing something else in the code why not use string.Join and reduce it to …

return string.Join(",", Roles);
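If you want to convince yourself the two are interchangeable, here's a small sketch that wraps both versions in methods (RoleFormatting is a hypothetical name) so they can be compared side by side, including the empty and single-item edge cases the loop has to get right by hand.

```csharp
using System;

public static class RoleFormatting
{
    // The hand-rolled loop, wrapped in a method for comparison.
    public static string JoinWithLoop(string[] roles)
    {
        var result = string.Empty;
        for (var index = 0; index < roles.Length; index++)
        {
            result += roles[index];
            if (index != roles.Length - 1)
            {
                result += ",";
            }
        }
        return result;
    }

    // The framework version: same output, one line.
    public static string JoinWithFramework(string[] roles) => string.Join(",", roles);
}
```

string.Join also has an overload taking an IEnumerable<string>, so there's no need to call ToArray() on a query first.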

If we can start blogging code which harnesses the power of the frameworks we’re using then hopefully we can pass on this experience and techniques to newer developers and we can start to not reinvent the wheel, write more robust code and concentrate on the fun stuff.

Wednesday, 24 April 2013

Tournament – Learning to CRUD

This is the 3rd part of a series that is following my progress writing a sporting tournament results website. The previous posts in this series can be found at:

- Part 1 – My Journey with Asp.Net MVC and RavenDB – Introduction to Tournament

- Part 2 – Tournament – Project Setup

In the previous post I explained the setup that I will be using for my project. In this post I will go through the basic CRUD operations and interesting routing issues which I found to get the setup I was looking for.

What do I want to achieve?

By the end of this post I will show you how I have managed to sort out the CRUD operations for a simple Entity object including working with RavenDB to get auto incrementing identifiers. I will also describe the tweaks to the default routing definitions to allow for the url pattern I want to continue to work with for the public urls.

Should your Entity be your ViewModel?

After my post the other day about your view driving data structure I decided to put in ViewModel / Entity mappings after originally writing the CRUD setup straight onto both my Team and Player entities. I didn’t like the fact that there were DataAnnotations in my data model so I refactored it to use ViewModels instead to avoid this “clutter” and keep the separation between View and Data. To aid in the mapping between the two types I Nuget’ed down AutoMapper.

To keep with the default pattern, where everything the application requires is set up in Application_Start in Global.asax.cs but done by calling static methods on dedicated classes in App_Start, I created an AutoMapperConfig class with a static register method (see below).

public class AutoMapperConfig
{
    public static void RegisterMappings()
    {
        // Team
        Mapper.CreateMap<Team, TeamViewModel>();
        Mapper.CreateMap<TeamViewModel, Team>();

        // Location
        Mapper.CreateMap<Location, LocationViewModel>();
        Mapper.CreateMap<LocationViewModel, Location>();

        // Player
        Mapper.CreateMap<Player, PlayerViewModel>();
        Mapper.CreateMap<PlayerViewModel, Player>();
    }
}

Doing this keeps all my mappings in a single place and doesn’t clutter Global.asax.cs with unnecessary mess.
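For anyone wondering what a call like Mapper.CreateMap<Team, TeamViewModel>() buys you, the core idea is copying properties that share a name and type. Here's a drastically simplified, reflection-based sketch of that convention. SimpleMapper is a hypothetical name, this is not AutoMapper's code or API, and the Team / TeamViewModel types are cut-down stand-ins.

```csharp
using System;
using System.Linq;
using System.Reflection;

// Cut-down stand-ins for the post's entity and view model.
public class Team
{
    public string Id { get; set; }
    public string Name { get; set; }
}

public class TeamViewModel
{
    public string Id { get; set; }
    public string Name { get; set; }
    public string Slug { get; set; }
}

public static class SimpleMapper
{
    // Copies each readable public property of the source onto a writable public
    // property of the destination with the same name and type; others are skipped.
    public static TDest Map<TSource, TDest>(TSource source) where TDest : new()
    {
        var dest = new TDest();
        var sourceProps = typeof(TSource)
            .GetProperties(BindingFlags.Public | BindingFlags.Instance)
            .Where(p => p.CanRead && p.GetIndexParameters().Length == 0);

        foreach (var sourceProp in sourceProps)
        {
            var destProp = typeof(TDest).GetProperty(sourceProp.Name, BindingFlags.Public | BindingFlags.Instance);
            if (destProp != null && destProp.CanWrite && destProp.PropertyType == sourceProp.PropertyType)
            {
                destProp.SetValue(dest, sourceProp.GetValue(source));
            }
        }
        return dest;
    }
}
```

A real mapper adds much more (configuration validation, flattening, custom member mappings, caching of the reflection work), which is why pulling in AutoMapper rather than hand-rolling this is the sensible choice.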

Auto incrementing Ids

The default setup for storing documents in RavenDB uses a HiLo algorithm. However, this does not give the auto-incrementing id functionality I’d like. Why won’t this do? Well, the id makes up part of the url route linking to the individual entity, and I want to keep that sequential rather than dotted about. The default id generation would be fine for ids which aren’t exposed to the public, but they will be in this application.

Thanks to the pointers from Itamar and Kim on Twitter I was able to achieve the desired effect without much trouble at all. In the Create method on the TeamController, when storing the data, I had to call a different overload of the Store method and specify the collection part of the identifier to use.
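Here's a deliberately simplified model of why HiLo ids can jump around. It is not RavenDB's implementation, and HiLoRangeServer, HiLoClient and SequentialIdServer are invented names. In this model each client reserves a block of ids up front, so two clients (or a restarted one, which reserves a fresh block) interleave ids from different blocks. A single counter per collection, which is the sequential behaviour I'm after, hands out the next number every time.

```csharp
using System;
using System.Collections.Generic;

// Hands out ranges of ids: 1..capacity, then capacity+1..2*capacity, and so on.
public sealed class HiLoRangeServer
{
    private long _nextHi;
    public int Capacity { get; }

    public HiLoRangeServer(int capacity)
    {
        Capacity = capacity;
    }

    public (long First, long Last) ReserveRange()
    {
        long first = _nextHi * Capacity + 1;
        _nextHi++;
        return (first, first + Capacity - 1);
    }
}

// Uses its reserved range locally and only asks the server again when it runs out.
public sealed class HiLoClient
{
    private readonly HiLoRangeServer _server;
    private long _next = 1, _last = 0; // empty range until the first reservation

    public HiLoClient(HiLoRangeServer server)
    {
        _server = server;
    }

    public string NextId(string collection)
    {
        if (_next > _last)
        {
            (_next, _last) = _server.ReserveRange();
        }
        return collection + "/" + _next++;
    }
}

// One counter per collection prefix: every id is the previous one plus one.
public sealed class SequentialIdServer
{
    private readonly Dictionary<string, long> _counters = new Dictionary<string, long>();

    public string NextId(string collectionPrefix)
    {
        _counters.TryGetValue(collectionPrefix, out var current);
        _counters[collectionPrefix] = current + 1;
        return collectionPrefix + (current + 1);
    }
}
```

With a capacity of 32, two clients storing teams alternately would produce teams/1, teams/33, teams/2 and so on, while the sequential counter gives teams/1, teams/2, teams/3. The trade-off is that HiLo avoids a server round trip per id, which is presumably why it is the default.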

RavenSession.Store(team, "teams/");

And that was it.

Routing setup

There were two issues when it came to routing: accessing the record for admin functions, and accessing the details of a specific entity while keeping the routing of the current site. Default RavenDB ids are made up of the collection name of the document and the id, e.g. “teams/2”. For Asp.net MVC to understand these, the controller actions which accept an id need to be changed from int to string:

public ActionResult Edit(string id) { .. }

And the default route gets updated as follows:

routes.MapRoute(
    name: "Default",
    url: "{controller}/{action}/{*id}",
    defaults: new { controller = "Home", action = "Index", id = UrlParameter.Optional }
);

At a brief glance it might not look like anything has changed; however, the {id} part of the url has been updated to {*id}, which makes it a catch-all parameter. This lets the id contain a slash, so the RavenDB default collection-based identifiers, e.g. teams/2, will match.

The next part was the public routes to see the details of the different entities. Let me explain this a bit further. The default url to access teams is /team and for a specific team I want to have a url which follows the url pattern of /team/{id}/{slug} eg /team/1/team-name-slug-here. This is to partially aid with SEO but also make the urls more human readable when posted on forums, twitter etc.
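The slug part needs generating from the entity's name somehow. The post doesn't cover this, so here's one possible sketch; SlugHelper, ToSlug and DetailUrl are hypothetical names. It keeps ASCII letters and digits and collapses everything else into single hyphens (so accented letters are simply dropped, which may or may not suit your data).

```csharp
using System;
using System.Text;

public static class SlugHelper
{
    // Lower-cases the text, keeps a-z and 0-9, and turns every run of
    // other characters into a single hyphen between words.
    public static string ToSlug(string text)
    {
        var builder = new StringBuilder();
        var pendingHyphen = false;

        foreach (var ch in text.ToLowerInvariant())
        {
            if ((ch >= 'a' && ch <= 'z') || (ch >= '0' && ch <= '9'))
            {
                // Only add a hyphen between words, never at the start.
                if (pendingHyphen && builder.Length > 0)
                {
                    builder.Append('-');
                }
                builder.Append(ch);
                pendingHyphen = false;
            }
            else
            {
                pendingHyphen = true;
            }
        }
        return builder.ToString();
    }

    // Builds a public url in the /{controller}/{id}/{slug} shape.
    public static string DetailUrl(string controller, string numericId, string name) =>
        "/" + controller + "/" + numericId + "/" + ToSlug(name);
}
```

For example, DetailUrl("team", "1", "Team Name Slug Here") gives /team/1/team-name-slug-here.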

This can be established using the following route:

routes.MapRoute(
    name: "EntityDetail",
    url: "{controller}/{id}/{slug}",
    defaults: new { action = "detail", slug = UrlParameter.Optional },
    constraints: new { id = @"^[0-9]+$" }
);

The constraint limits the matching to integer values only. Remember that routes are matched in the order they are registered, so more specific routes like this one need to be registered before the general ones. We want the restriction to apply to this url pattern only.

The next hurdle to overcome was the RavenDB identifier, which includes the collection name (e.g. teams/2 or players/5), whereas all I want in the url is the integer part, to match the route pattern defined above. To do this I wrote a small extension method on string. It’s very basic, see below.
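Here's a quick way to see which url segments would get past that constraint, using the same pattern with System.Text.RegularExpressions (RouteConstraintCheck is a hypothetical name). It shows why /team/1/some-slug reaches the detail route while something like /team/edit/1 doesn't: "edit" lands in the {id} position, fails the constraint, and the request falls through to the next route.

```csharp
using System;
using System.Text.RegularExpressions;

public static class RouteConstraintCheck
{
    // The same pattern used in the EntityDetail route's id constraint.
    private static readonly Regex NumericId = new Regex(@"^[0-9]+$");

    // True when a url segment would satisfy the id constraint.
    public static bool MatchesIdConstraint(string segment) => NumericId.IsMatch(segment);
}
```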

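public static class RavenIdExtensions
{
    // "teams/2" -> "2"; an id without a collection prefix is returned unchanged
    public static string Id(this string ravenId)
    {
        var split = ravenId.Split('/');
        return (split.Length == 2) ? split[1] : split[0];
    }
}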

This lets me reference the identifier properties on my view models while still being able to access the integer part to set up the route for the entity-specific, non-admin views. So how do we use this?

<viewModel>.Id.Id()

Conclusion

In this post I have shown how to setup the basic mappings between entities and view models. In addition to this I have shown how I made Raven DB play nice with sequential incrementing identifiers. I have also discussed the current routing requirements and how to extract out the integer identifier part of a Raven DB collection identifier to be able to set these as required.

In the next post I will briefly discuss how I am planning on saving match results and take a look at my first attempt at using indexes in Raven DB to calculate each player’s results.

As always you can follow the progress of the project on GitHub. Any pointers, comments, suggestions please let me know via Twitter or leave a comment on this blog.

Monday, 22 April 2013

Tournament – Project Setup

For the first few iterations of this project it will be based in a single Asp.Net MVC 4 Internet Web Application. This can be found through the regular File > New process in Visual Studio. I may at some point decide to break it up into different projects, but at the moment I don’t see an immediate need and don’t want to overcomplicate it at the beginning.

Once Visual Studio has setup the project and is happy then it’s time to add in the required packages to use RavenDB. Most of the development will be done using just the client RavenDB functionality as I have a RavenDB server running locally however when I get close to deployment I will create it so that it can either run with a dedicated server instance or in an embedded way.

The NuGet packages which I have installed are the following:

<package id="RavenDB.Client" version="2.0.2261" targetFramework="net40" />
<package id="RavenDB.Client.MvcIntegration" version="2.0.2261" targetFramework="net40" />

These give us the basics required to get the data access rolling as well as a way to start profiling what is going on.

I’ve decided to set up the DocumentStore instance in Global.asax.cs on Application_Start, then open a session on each action call via a BaseController and finalise it at the end of each action. After some Googling this seems to be a simple and common way to set up a RavenDB session that avoids repeating code (keeping things DRY), and I read somewhere (can’t remember where) that creating a session is relatively inexpensive processing-wise. If this changes, or I find a performance issue, I may need to come back to it.

The base controller is very simple …

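```csharp
using System.Web.Mvc;
using Raven.Client;

public class BaseController : Controller
{
    public IDocumentSession RavenSession { get; protected set; }

    protected override void OnActionExecuting(ActionExecutingContext filterContext)
    {
        // open a new session for each action call from the store created in Application_Start
        RavenSession = MvcApplication.Store.OpenSession();
    }

    protected override void OnActionExecuted(ActionExecutedContext filterContext)
    {
        if (filterContext.IsChildAction)
            return;

        // dispose of the session once the action has finished
        using (RavenSession)
        {
            // don't save anything if the action threw an exception
            if (filterContext.Exception != null)
                return;

            if (RavenSession != null)
                RavenSession.SaveChanges();
        }
    }
}
```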

Now we’re ready to roll!

As always you can follow the progress of the project on GitHub. Any pointers, comments, suggestions please let me know via Twitter or leave a comment on this blog.

Thursday, 18 April 2013

My Journey with Asp.Net MVC and RavenDB – Introduction to Tournament

The idea of this journey is based on a number of reasons. The main two are to learn how to use and work with RavenDB, focusing the usage in an Asp.Net MVC context and the other is to re-work a site which has been live for the past 4 years which has had minimal change over that time and needs a refresh and admin functionality.

I have chosen to use RavenDB as the backend of this system because of the nature of the data being stored. The site is a sporting results site, so once an event has taken place the results will not change. They may need some tweaking while being entered, but once they are in and confirmed they won't change. Because of this I feel a relational database isn't the best fit for the data. Reading, and the speed of reading, is the main function. The current site calculates scores on the fly every time, whereas this isn't required: once the results are in, they're in.

I have decided to post the code onto GitHub during development so the world can see how I'm progressing. I will also be posting about what I have learnt, what issues I've come across and how I've overcome them. I also believe it's a good way to showcase what I am capable of. This series won't be a step-by-step walkthrough, but I hope that looking at the code alongside the descriptions of the main parts in the blog will help others get up and running.

Any pointers, comments, suggestions please let me know via Twitter or leave a comment on this blog.

Let’s start the journey ….

Wednesday, 20 March 2013

View Driven Data Design; don’t let your view shape your data

Recently I have found myself coming across a couple of view to data scenarios where you can see that the view has driven the data model. This makes my spidey sense tingle as this strikes me as too tight coupling between data and view.

So what am I actually talking about? I’ve called it “View Driven Data Design”.

So, an example to make things clearer. Any developer who has been in the business long enough will have worked with a UI that involves category and sub category dropdowns or pickers. These values are usually driven by a dynamic data source (database, XML definition etc.). By dynamic I mean values that are not hard coded and so don't require recompilation to become available. They might be maintained through an administration function, a nightly batch job that updates the data, or a configuration file. The dropdowns can have some cascading or restriction functionality built in to improve usability, but the values remain dynamic. They can be stored by id (usually with a foreign key to the source) to allow for normalisation etc. This is fine for this scenario; however, there are times when this approach is overkill and can cause future issues and frustrations.

Imagine a scenario where you have a single data item (entity) modelled as a single class definition. An instance of this class requires classification. Unlike our earlier example, the "category" is driven by a list of hard coded enum values. The UI designer has specified that a specific category value will reveal another option to further categorise the entity. This sub category is also driven by a fixed set of values in another enum. Furthermore, there is a specific correlation between the category and sub category options. As before, these values can be stored on two properties of the entity. What's wrong with this, I hear you ask? On the face of it, nothing. It is when you come to use these categorisations in other parts of the system that the problems creep in.

So you've created the instances in the CRUD section of the application and it's all running fine. Then a new requirement arrives to use them in a different form and run some logic depending on the classification; this is where the issue rears its ugly head.

When trying to classify an instance of the entity you now need to check different combinations of two properties. This means the same business logic potentially ends up in multiple places in the code base, which is bad. If there are other values to check as well, this adds unnecessary noise and complexity to the business logic. So how do we make the developer's life easier and keep the UI designer happy at the same time?

It all starts at the data model level and answering “what value(s) do we actually have to store and how can this improve code clarity?”.

Start off by looking to see if the distinct list of valid combinations between the two enum sets can be merged into one set. If there are too many options then they will probably need to stay split. However, if the sub categories only apply to a small number of main categories, then a single set may be the better option. This will need to be decided case by case for each implementation.

Right we have one property on the entity and the UI designer still wants their category and sub category dropdowns with visibility rules etc. This is where we need to loosely couple the data model away from the view. This is the exact reason why we have the concept of a view model. Using the entity data model we can define and populate a specific model to drive the view. In this case this will probably include like for like property mapping where nothing special needs to happen (eg. simple text values such as names) but for the single category enum it will need to get mapped to the two different options and can then drive the visibility rules etc. On the save action a conversion can map from the two options back into one ready to save.
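To make the mapping concrete, here is a minimal sketch in JavaScript. It assumes a hypothetical single stored classification with made-up values (Hardware, SoftwareDesktop, SoftwareWeb). A lookup table maps each stored value to a category/sub category pair for the view, and the reverse mapping merges the pair back into one value on save. The names are illustrative only and not taken from any real project.

```javascript
// Hypothetical single stored classification: each valid category /
// sub category combination is one member of one "enum".
const Classification = Object.freeze({
  HARDWARE: "Hardware",
  SOFTWARE_DESKTOP: "SoftwareDesktop",
  SOFTWARE_WEB: "SoftwareWeb",
});

// How each stored value is presented in the view (two dropdowns).
const viewMap = {
  [Classification.HARDWARE]: { category: "Hardware", subCategory: null },
  [Classification.SOFTWARE_DESKTOP]: { category: "Software", subCategory: "Desktop" },
  [Classification.SOFTWARE_WEB]: { category: "Software", subCategory: "Web" },
};

// Entity -> view model: split the single value into the two dropdown values.
function toViewModel(entity) {
  const pair = viewMap[entity.classification];
  return {
    name: entity.name, // like for like mapping
    category: pair.category,
    subCategory: pair.subCategory,
    showSubCategory: pair.subCategory !== null, // drives the visibility rule
  };
}

// View model -> entity: merge the two dropdown values back into one value.
function toEntity(viewModel) {
  const match = Object.keys(viewMap).find(function (key) {
    return viewMap[key].category === viewModel.category &&
      viewMap[key].subCategory === viewModel.subCategory;
  });
  if (match === undefined) {
    throw new Error("Invalid category / sub category combination");
  }
  return { name: viewModel.name, classification: match };
}
```

Business logic elsewhere then needs a single check, for example `entity.classification === Classification.SOFTWARE_WEB`, rather than testing two properties in combination.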

This allows for the UI to be driven with the user experience the designer/analyst required while also making further business logic simpler and clearer to understand for the developer; both to write originally but also for future code maintenance.

So in the future, when your designer wants fancy dropdowns and categorisations, take a moment to ask: does it need to be stored that way? Your future self will thank you instead of taking your name in vain.

Sunday, 20 January 2013

Hello World with KnockoutJs–Code Restructure

Recently I have been reading a number of articles and watching training videos from Pluralsight about Javascript and KnockoutJs. After reading the “Hello world, with Knockout JS and ASP.NET MVC 4!” by Amar Nityananda I wanted to see if I could apply the techniques of what I’d learnt to a relatively simple example. After reading the original article I couldn’t help but think “this Js could be better structured, I think I could do this” … so this is what I came up with.

To get an understanding of the problem please read the original article first.

I’ve only concentrated on the client side JavaScript as this was the challenge …

```javascript
// setup the namespace
var my = my || {};

$(function () {

    my.Customer = function () {
        var self = this;
        self.id = ko.observable();
        self.displayName = ko.observable();
        self.age = ko.observable();
        self.comments = ko.observable();
    };

    my.vm = function () {
        var
            // the storing array
            customers = ko.observableArray([]),

            // the current editing customer
            customer = ko.observable(new my.Customer()),

            // visible flag
            hasCustomers = ko.observable(false),

            // get the customers from the server
            getCustomers = function () {
                // clear out the client side and reset the visibility
                customers([]);
                hasCustomers(false);

                // get the customers from the server
                $.getJSON("/api/customer/", function (data) {
                    $.each(data, function (key, val) {
                        // writing to an observable returns its owner object,
                        // so the setters can be chained
                        var item = new my.Customer()
                            .id(val.id)
                            .displayName(val.displayName)
                            .age(val.age)
                            .comments(val.comments);
                        customers.push(item);
                    });
                    hasCustomers(customers().length > 0);
                });
            },

            // add the customer from the entry boxes
            addCustomer = function () {
                $.ajax({
                    url: "/api/customer/",
                    type: 'post',
                    data: ko.toJSON(customer()),
                    contentType: 'application/json',
                    success: function (result) {
                        // add it to the client side listing, this will add the id to the record;
                        // not great for multi user systems as one client may miss an updated record
                        // from another user
                        customers.push(result);
                        customer(new my.Customer());
                    }
                });
            };

        return {
            customers: customers,
            customer: customer,
            getCustomers: getCustomers,
            addCustomer: addCustomer,
            hasCustomers: hasCustomers
        };
    }();

    ko.applyBindings(my.vm);
});
```

The main points I was trying to cover are:

- Code organisation through namespaces – I don’t want any potential naming conflicts. This isn’t a big issue in a small application but once they start growing it’s good to try and avoid putting definitions into the global namespace.

- Expose the details of the view model through the Revealing Module pattern – this keeps implementation details private and improves data binding clarity.
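To show these two ideas without the Knockout and jQuery noise, here is a minimal sketch. It uses a hypothetical `my.counter` module that is not part of the original project. The namespace line adds only `my` to the global scope, and the immediately invoked function keeps `count` private while returning an object that reveals just the public functions.

```javascript
// Reuse the namespace object if another script has already created it,
// otherwise start a new one; only "my" is added to the global scope.
var my = my || {};

// Revealing Module pattern: the immediately invoked function keeps its
// variables private and returns an object exposing only the public members.
my.counter = (function () {
  var count = 0; // private state, not reachable from outside

  function increment() {
    count += 1;
    return count;
  }

  function reset() {
    count = 0;
  }

  function current() {
    return count;
  }

  // Reveal the public API by mapping names onto the private functions.
  return {
    increment: increment,
    reset: reset,
    current: current,
  };
})();
```

Callers can only reach `my.counter.increment()`, `my.counter.reset()` and `my.counter.current()`. The `count` variable itself is invisible, which is the same reason the view model above returns an object listing only what the bindings need.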

I haven’t gone into too much detail as the rest of the project infrastructure is very similar to the original article and can be found there. I wanted to post this as an example of how to make the Js structured.

Happy to discuss further; contactable in the comments or via twitter.

Updated Edit

After a comment from ranga I read the link they posted and updated the code (see below). I have moved the view model and the Customer declaration outside of the document ready event. I have also changed hasCustomers to be a computed value. The point about the performance hit caused by notifications firing on every array manipulation was really interesting; it's worth a read.
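The performance point is easy to see with a tiny experiment. The sketch below uses a hypothetical minimal observable array that I've written for illustration; it is not Knockout's implementation. It notifies subscribers on every change and counts the notifications sent when the same five items are loaded one push at a time versus in a single replacement.

```javascript
// Minimal stand-in for an observable array (hypothetical, not Knockout):
// every change notifies all subscribers.
function createObservableArray(initial) {
  let items = initial.slice();
  const subscribers = [];

  function notify() {
    subscribers.forEach(function (callback) { callback(items); });
  }

  return {
    subscribe: function (callback) { subscribers.push(callback); },
    // one notification per pushed item
    push: function (item) { items.push(item); notify(); },
    // one notification for the whole replacement
    replaceAll: function (newItems) { items = newItems.slice(); notify(); },
  };
}

// Count how many notifications a given loading strategy triggers.
function countNotifications(load, data) {
  const arr = createObservableArray([]);
  let notifications = 0;
  arr.subscribe(function () { notifications += 1; });
  load(arr, data);
  return notifications;
}

const data = [1, 2, 3, 4, 5];
const perItem = countNotifications(function (arr, d) {
  d.forEach(function (x) { arr.push(x); });
}, data);
const batched = countNotifications(function (arr, d) {
  arr.replaceAll(d);
}, data);
console.log(perItem, batched); // 5 1
```

This is why the updated code builds a plain array first and sets the observable array once. Every subscriber, including the computed `hasCustomers` and the UI bindings, re-evaluates once rather than once per customer.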

```javascript
// setup the namespace
var my = my || {};

my.Customer = function () {
    var self = this;
    self.id = ko.observable();
    self.displayName = ko.observable();
    self.age = ko.observable();
    self.comments = ko.observable();
};

my.vm = function () {
    var
        // the storing array
        customers = ko.observableArray([]),

        // the current editing customer
        customer = ko.observable(new my.Customer()),

        // visible flag; recalculated automatically whenever customers changes
        hasCustomers = ko.computed(function () {
            return customers().length > 0;
        }),

        // get the customers from the server
        getCustomers = function () {
            // clear out the client side
            customers([]);

            // get the customers from the server
            $.getJSON("/api/customer/", function (data) {
                // build the list in a plain array first ...
                var items = [];
                $.each(data, function (key, val) {
                    var item = new my.Customer()
                        .id(val.id)
                        .displayName(val.displayName)
                        .age(val.age)
                        .comments(val.comments);
                    items.push(item);
                });
                // ... then set the observable array once so subscribers are notified once
                customers(items);
            });
        },

        // add the customer from the entry boxes
        addCustomer = function () {
            $.ajax({
                url: "/api/customer/",
                type: 'post',
                data: ko.toJSON(customer()),
                contentType: 'application/json',
                success: function (result) {
                    // add it to the client side listing, this will add the id to the record;
                    // not great for multi user systems as one client may miss an updated record
                    // from another user
                    customers.push(result);
                    customer(new my.Customer());
                }
            });
        };

    return {
        customers: customers,
        customer: customer,
        getCustomers: getCustomers,
        addCustomer: addCustomer,
        hasCustomers: hasCustomers
    };
}();

$(function () {
    ko.applyBindings(my.vm);
});
```

Keep the feedback coming, I’m always up for learning and improving.

Thursday, 15 November 2012

Software Bucket List–Nov 2012

After reading Rob Maher’s blog post about the concept of software bucket list it got me thinking.

Let's take a step back for a minute: what's a bucket list? A bucket list is a list of all the things you want to do before you pass away. So a software bucket list for a developer is a list of software you'd ideally like to write before you retire. As Rob says, it can be anything; it's not about learning this or that, but about what you would like to build.

So this brings me back to me, his blog post got me thinking. The whole idea of the list is that it will evolve over time but as of November 2012 this is my list:

- Integrated disc golf membership system with result submission with associated automated renewals etc.

- Work for and write software for a Formula 1 team

- Multi player strategy re-write (modernise) of an old game we used to play in the early 00's – Europa

- Build <insert description here> to enable working from home for my own company – idea still hasn’t been thought of yet.

The last one doesn’t quite fit but it’s more a target to keep me inspired about moving forward.

Anyway, this is just a start and now it’s a seed that’s planted I’m sure I’ll come up with more but for now what’s yours?

Thursday, 27 September 2012

Debugging WCF messages before serialization

Over the past couple of weeks I've been doing a lot of work with WCF on the messages sent between client and server, and on securely signing them. Signing the message isn't the subject of this post. However, the technique I developed to see the message before it's sent also turned out to be the solution for debugging the messages passed between client and server.

This post shows how you can intercept the message after it's been serialized into XML but before it's been sent to the server, and then update it (or just look at the XML). The basis of all this is the IClientMessageInspector interface and adding it to your client calling code.

Let’s take a look at the message inspector and then I’ll talk through the additional classes which go with the inspector and then finally I’ll show you how to use the message inspector with a WCF client proxy generated class; in both code and through configuration.

IClientMessageInspector

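```csharp
using System.ServiceModel;
using System.ServiceModel.Channels;
using System.ServiceModel.Dispatcher;

public class DebugMessageInspector : IClientMessageInspector
{
    public object BeforeSendRequest(ref Message request, IClientChannel channel)
    {
        // the return value is passed to AfterReceiveReply as correlationState;
        // null is fine when no correlation is needed
        return null;
    }

    public void AfterReceiveReply(ref Message reply, object correlationState)
    {
    }
}
```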

Above are the method signatures that IClientMessageInspector requires you to implement. The method names describe exactly when they are called: BeforeSendRequest runs as the request is sent to the server, and AfterReceiveReply runs as the response is returned. Whatever BeforeSendRequest returns is handed to AfterReceiveReply as the correlationState parameter. If you are using a strongly typed proxy and classes generated from a WSDL, BeforeSendRequest runs after the message has been created from the strongly typed objects, and AfterReceiveReply runs before the reply is deserialized back into them.

Inside each of the methods you can call the following code to allow inspection of the message.

```csharp
// requires: using System.IO; using System.ServiceModel.Channels; using System.Xml;
private Message Intercept(Message message)
{
    // read the message into an XmlDocument as then you can work with the contents
    // Message is a forward reading class only so once read that's it.
    MemoryStream ms = new MemoryStream();
    XmlWriter writer = XmlWriter.Create(ms);
    message.WriteMessage(writer);
    writer.Flush();
    ms.Position = 0;

    XmlDocument xmlDoc = new XmlDocument();
    xmlDoc.PreserveWhitespace = true;
    xmlDoc.Load(ms);

    // read the contents of the message here and update as required; eg sign the message

    // as the message is forward reading then we need to recreate it before moving on
    ms = new MemoryStream();
    xmlDoc.Save(ms);
    ms.Position = 0;
    XmlReader reader = XmlReader.Create(ms);
    Message newMessage = Message.CreateMessage(reader, int.MaxValue, message.Version);
    newMessage.Properties.CopyProperties(message.Properties);
    message = newMessage;
    return message;
}
```

The above is a very simple way of reading the message into a raw xml format and then converting it back into a Message for further processing from the framework.
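The underlying idea isn't specific to WCF: read a forward-only message once into a buffer, work on the buffered copy, then create a fresh message for the rest of the pipeline. Below is a minimal JavaScript sketch of that pattern. `createForwardOnlyMessage` and `intercept` are hypothetical stand-ins made up for illustration, not WCF's Message API. Unlike the C# above, they don't copy any message properties across.

```javascript
// Hypothetical forward-only message: its body can be read exactly once,
// loosely mirroring the behaviour of WCF's Message (this is not the WCF API).
function createForwardOnlyMessage(body) {
  let consumed = false;
  return {
    read: function () {
      if (consumed) {
        throw new Error("Message has already been read");
      }
      consumed = true;
      return body;
    },
  };
}

// Read the message once into a buffer, let the caller inspect or change the
// buffered copy, then build a fresh message so later stages can still read it.
function intercept(message, transform) {
  const buffered = message.read();          // the original is now spent
  const updated = transform(buffered);      // inspect or modify, e.g. sign
  return createForwardOnlyMessage(updated); // hand on a readable replacement
}
```

The original message can't be read again after `intercept` returns. That is why the helper must return the replacement and the caller must use it in place of the original, as the C# version does by assigning back to the `ref` parameter.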

So how do you hook the message inspector to the client proxy call?

You need an IEndpointBehavior.

IEndpointBehavior definition

The new endpoint behavior allows you to add, amongst other things, message inspectors to the ClientRuntime of any client that uses the behavior.

Implementing the interface gives you the following structure:

```csharp
using System.ServiceModel.Channels;
using System.ServiceModel.Description;
using System.ServiceModel.Dispatcher;

public class DebugMessageBehavior : IEndpointBehavior
{
    public void AddBindingParameters(ServiceEndpoint endpoint, BindingParameterCollection bindingParameters)
    {
    }

    public void ApplyClientBehavior(ServiceEndpoint endpoint, ClientRuntime clientRuntime)
    {
        // add the inspector to the client runtime
        clientRuntime.MessageInspectors.Add(new DebugMessageInspector());
    }

    public void ApplyDispatchBehavior(ServiceEndpoint endpoint, EndpointDispatcher endpointDispatcher)
    {
        // not needed for a client side inspector
    }

    public void Validate(ServiceEndpoint endpoint)
    {
    }
}
```

As you can see, a number of extension hook points are available, but for this post we'll concentrate on the "ApplyClientBehavior" method. This method gives you access to the MessageInspectors collection on the ClientRuntime. It is a SynchronizedCollection to which any instance of a class implementing IClientMessageInspector can be added.

That's it for the behavior definition. Let's see how we use it with a WCF client proxy class.

How do we use the IClientMessageInspector?

There are two ways in which the inspector can be used with a client proxy class: it can be added in code or through configuration. Both routes have pros and cons. Adding it in code is simple and means it is always applied; the downside is that it is always applied. Adding it through configuration means it can be enabled or disabled without recompiling the code. This is especially handy if the functionality is only for debugging and you don't want it running in Production, or if it turns on functionality that only applies under certain circumstances.

Let’s take a look at using the message inspector first with code and then through configuration.

Using the IClientMessageInspector in code

Through code (example code can be found on GitHub) you add it to the Endpoint Behavior collection directly. This can be done either straight after the proxy definition or at any point before any method is actually executed.

```csharp
var client = new ServiceReference1.Service1Client();
client.Endpoint.Behaviors.Add(new DebugMessageBehavior());
```

As you can see from the above it is instantiated calling a default constructor. At this point you can pass in constructor parameters however this will cause you issues if you want to move it to be bound in configuration later.

And that's it. Straightforward and simple.

Using the IClientMessageInspector through configuration

Adding the behavior through configuration takes a bit more work but as stated earlier being able to attach the functionality purely through configuration without changing code is worth the extra effort. To attach through configuration we need to define a new BehaviorExtensionElement which describes our new behavior.

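```csharp
using System;
using System.ServiceModel.Configuration;

// configuration element that lets WCF create the DebugMessageBehavior from config
public class DebugMessageBehaviourElement : BehaviorExtensionElement
{
    protected override object CreateBehavior()
    {
        return new DebugMessageBehavior();
    }

    public override Type BehaviorType
    {
        get
        {
            return typeof(DebugMessageBehavior);
        }
    }
}
```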

Once this has been created and the solution built, the configuration needs to be updated as below:

```xml
<behaviors>
  <endpointBehaviors>
    <!-- 2. endpoint behavior that uses the new extension -->
    <behavior name="debugInspectorBehavior">
      <debugInspector/>
    </behavior>
  </endpointBehaviors>
</behaviors>
<client>
  <!-- 3. attach the endpoint behavior to the client endpoint -->
  <endpoint address="http://localhost:60428/Service1.svc" binding="basicHttpBinding"
            bindingConfiguration="BasicHttpBinding_IService1" contract="ServiceReference1.IService1"
            name="BasicHttpBinding_IService1" behaviorConfiguration="debugInspectorBehavior" />
</client>
<extensions>
  <behaviorExtensions>
    <!-- 1. register the behavior extension element ("Namespace.Type, Assembly") -->
    <add name="debugInspector" type="Client.DebugMessageBehaviourElement, Client"/>
  </behaviorExtensions>
</extensions>
```

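This all goes in the system.serviceModel node of your web/app config file. The important parts are the behaviorExtensions registration, the endpoint behavior that uses it, and the behaviorConfiguration attribute on the client endpoint.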

First off you have to define a behavior extension pointing at your newly created behavior element class. This is a reflection string so the fully qualified type name needs to be followed by the name of the assembly that contains it.
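To make the shape of that string clearer, here is a small sketch that splits a type reference like the one above into its parts. `parseTypeReference` is a hypothetical helper for illustration only; WCF does its own parsing. It assumes the type has no generic arguments, because those contain commas inside brackets and would break the simple split.

```javascript
// Split an assembly-qualified type name such as
// "Client.DebugMessageBehaviourElement, Client" into its parts.
// Assumption: no generic type arguments (they contain commas in brackets).
function parseTypeReference(reference) {
  const parts = reference.split(",").map(function (p) { return p.trim(); });
  const fullTypeName = parts[0];
  const assemblyName = parts[1];
  if (!fullTypeName || !assemblyName) {
    throw new Error("Expected 'Namespace.Type, Assembly'");
  }
  const lastDot = fullTypeName.lastIndexOf(".");
  return {
    namespace: lastDot === -1 ? "" : fullTypeName.slice(0, lastDot),
    typeName: fullTypeName.slice(lastDot + 1),
    assembly: assemblyName,
    // any further parts (Version=..., Culture=..., PublicKeyToken=...)
    // identify the assembly more precisely
    assemblyDetails: parts.slice(2),
  };
}
```

For the configuration above, the namespace is `Client`, the type is `DebugMessageBehaviourElement` and the assembly is `Client`. Leaving off the part after the comma is the usual reason the extension can't be found.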

Secondly, you create an endpoint behavior that uses the newly created extension. At this point IntelliSense in Visual Studio may flag the element as unrecognised; this warning can be ignored.

Third and finally you specify the newly created endpoint behavior as the behaviorConfiguration for your client end point.

Result

Either way, the whole outgoing message looks like this:

```xml
<?xml version="1.0" encoding="utf-8"?>
<s:Envelope xmlns:s="http://schemas.xmlsoap.org/soap/envelope/">
  <s:Header>
    <Action s:mustUnderstand="1" xmlns="http://schemas.microsoft.com/ws/2005/05/addressing/none">http://tempuri.org/IService1/GetData</Action>
  </s:Header>
  <s:Body>
    <GetData xmlns="http://tempuri.org/">
      <value>7</value>
    </GetData>
  </s:Body>
</s:Envelope>
```

And on the way back:

```xml
<?xml version="1.0" encoding="utf-8"?>
<s:Envelope xmlns:s="http://schemas.xmlsoap.org/soap/envelope/">
  <s:Body>
    <GetDataResponse xmlns="http://tempuri.org/">
      <GetDataResult>You entered: 7</GetDataResult>
    </GetDataResponse>
  </s:Body>
</s:Envelope>
```

Conclusion

Message inspectors are a very powerful tool when working with WCF and integration services. They can be used for debugging purposes as shown or for performing other actions on the message before it gets sent or on its return such as securely signing the message with a certificate.

The demo code I've used for this post can be found on GitHub; but remember it comes with a "Works on my machine" seal, so use at your own risk.

Sunday, 23 September 2012

Opening Pluralsight Single Page Application course code in VS2010

John Papa published a very good course on Pluralsight the other day about how to create a Single Page Application (SPA) and due to the popularity of the course Pluralsight opened it up for a short time only for free (thanks!). This allowed me to see exactly the type of quality I would receive when my training budget at work comes through soon (fingers crossed for October).

After watching some of the videos it was really interesting but I wasn’t quite getting how it all fitted together in the solution so was very pleased to find that the free sign up included being able to download the course material including the final solution. After downloading the zip file, expanding the contents and locating the .sln file I double clicked it and waited for Visual Studio 2010 to fire it up.

On Visual Studio 2010 opening the solution I was presented with a very sad looking solution explorer.

This is because the course was written in Visual Studio 2012, so .NET 4.5 was used for the projects. I reasoned this shouldn't be a problem, as all the technologies being used are also available for .NET 4, so I set about trying to get it to work.

Step 1; getting the projects to load

This in itself wasn’t that tricky. Firing up the .csproj files in notepad and locating the TargetFrameworkVersion I changed it to “v4.0”, saved and repeated it for all of the projects in the solution.

```xml
<TargetFrameworkVersion>v4.5</TargetFrameworkVersion>
```

To

```xml
<TargetFrameworkVersion>v4.0</TargetFrameworkVersion>
```

Once all the projects had been updated I reopened the solution in Visual Studio 2010 and got a prompt about configuring IISExpress. CodeCamper is configured to use IISExpress however if you don’t have it installed you will need to install it or configure the solution to work on a local IIS instance.

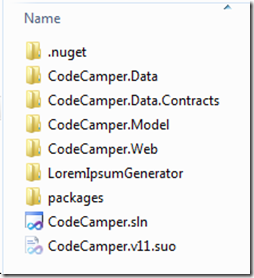

On clicking "Yes", Visual Studio loaded the projects, Resharper did its usual solution scan, and I was presented with a much happier looking solution explorer.

Step 2; let's try and build

On building the solution I got the following warnings, but it did build.

warning CS1684: Reference to type 'System.Data.Spatial.DbGeometry' claims it is defined in 'c:\Program Files (x86)\Reference Assemblies\Microsoft\Framework\.NETFramework\v4.0\System.Data.Entity.dll', but it could not be found

warning CS1684: Reference to type 'System.Data.Spatial.DbGeography' claims it is defined in 'c:\Program Files (x86)\Reference Assemblies\Microsoft\Framework\.NETFramework\v4.0\System.Data.Entity.dll', but it could not be found

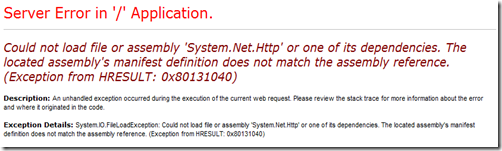

After a "successful" build I fired up the site in IISExpress and started browsing to see how it all fitted together. Instead I got the following error.

Step 3; changing 4.5 to 4

Changing the framework version in the .csproj files wasn't enough, so I went through the web.config of the Asp.Net MVC project and changed the targetFramework attribute to "4.0".

I also removed targetFramework="4.5" from the httpRuntime element, removed the machineKey element below it, and hit refresh.

By now I was pretty sure that the remaining dependency and reference errors were down to the framework version. A little Googling showed that the version number of System.Net.Http had been bumped up to 4 in .NET 4.5, but for .NET 4.0 it needs to continue to reference version 2. With this in mind I updated the web.config from:

```xml
<dependentAssembly>
  <assemblyIdentity name="System.Net.Http" publicKeyToken="b03f5f7f11d50a3a" culture="neutral" />
  <bindingRedirect oldVersion="0.0.0.0-4.0.0.0" newVersion="4.0.0.0" />
</dependentAssembly>
```

to

```xml
<dependentAssembly>
  <assemblyIdentity name="System.Net.Http" publicKeyToken="b03f5f7f11d50a3a" culture="neutral" />
  <bindingRedirect oldVersion="0.0.0.0-4.0.0.0" newVersion="2.0.0.0" />
</dependentAssembly>
```

and hit refresh.
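For anyone unsure what that element actually says, here is a rough JavaScript model of the version rule only. `applyBindingRedirect` and `compareVersions` are hypothetical helpers for illustration. The real runtime also matches the assembly name, public key token and culture before any of this applies. Any requested version within the inclusive oldVersion range is loaded as newVersion instead.

```javascript
// Compare two four-part .NET assembly versions ("major.minor.build.revision").
function compareVersions(a, b) {
  const pa = a.split(".").map(Number);
  const pb = b.split(".").map(Number);
  for (let i = 0; i < 4; i++) {
    const diff = (pa[i] || 0) - (pb[i] || 0);
    if (diff !== 0) return diff;
  }
  return 0;
}

// Model of a bindingRedirect: a requested version inside the oldVersion
// range (inclusive, or a single version) is redirected to newVersion.
function applyBindingRedirect(requested, oldVersion, newVersion) {
  const [low, high = low] = oldVersion.split("-");
  const inRange = compareVersions(requested, low) >= 0 &&
    compareVersions(requested, high) <= 0;
  return inRange ? newVersion : requested;
}
```

With the updated config, anything asking for System.Net.Http 4.0.0.0 (what the .NET 4.5 build referenced) is pointed at 2.0.0.0, the version available to a .NET 4.0 project.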

At this point I decided I would ignore the AntiXss encoder reference for now and come back to it later. I’m not going to publish the site anywhere so not worried about cross site scripting. However if you are going to put a site up into the wild then you will need to stop this kind of attack. Discussion for this is out of scope for this post.

I hit refresh and was presented with the Code Camper blue screen.

However no data.

Step 4; getting data

At this point I thought I was pretty close and was fairly sure the problem had something to do with the connection string. So after checking the connection strings configured in the web.config and noting which one was active, I made sure CodeCamper.sdf was present and ran it again.

Nothing. So I changed the CodeCamperDatabaseInitializer below to always drop and recreate the database, to make sure it was fresh with the seeded data. Remember to put it back to one of the other options afterwards, otherwise it will delete everything every time.

```csharp
public class CodeCamperDatabaseInitializer :
    //CreateDatabaseIfNotExists<CodeCamperDbContext>      // when model is stable
    //DropCreateDatabaseIfModelChanges<CodeCamperDbContext> // when iterating
    DropCreateDatabaseAlways<CodeCamperDbContext>
```

Nothing. At this point I decided to remove the sdf file and switch the connection string to the SQLExpress one. I knew I had SQLExpress installed and running, so it would be perfectly fine for study purposes.

Time to try running it again to see which error would occur next. The next error was inside the Ninject dependency scope implementation, in the NinjectDependencyScope.GetService(Type serviceType) method …

… with the wonderfully descriptive error message of

"Method not found: 'System.Delegate System.Reflection.MethodInfo.CreateDelegate(System.Type)'".

This was due to a Ninject version mismatch; uninstalling the Ninject NuGet package and reinstalling it to pull down the correct version seemed to resolve it.

Another rebuild and run. This time Entity Framework was not happy with the current build. After the Ninject issue I tried the same routine: I uninstalled the EntityFramework NuGet package and reinstalled it. It originally seemed to want .NET 4.5, but after reinstalling it is happy with 4. This needed to be done in both the CodeCamper.Data and CodeCamper.Web projects.

At this point I hit F5 and crossed my fingers. I was greeted with a screen full of green, friendly messages about getting data from the Asp.Net Web API and a smiling photo of the mighty ScottGu; finally I'd got it working!

Conclusion

There is one outstanding issue that was not resolved, around the AntiXss functionality, but the rest seems to work as expected.

Thanks again to John Papa and Pluralsight for creating and publishing this course and I can’t wait to get my full subscription to start enjoying the other courses.